Limiting the Unlimited: Managing Risks and Safeguards in AI Development and Use

Issue 10, February 2026

Introduction

In recent years, the development and adoption of artificial intelligence (“AI”) have transformed how people live, work, and do business. While AI offers significant opportunities, it also presents inherent risks across its development, implementation, and utilization.

In response, the Government of Indonesia is preparing a Presidential Regulation on Artificial Intelligence Ethics (“Draft Regulation”), which is expected to be issued later this year. The Draft Regulation builds upon ethical principles previously introduced under Ministry of Communication and Informatics (now known as the Ministry of Communication and Digital Affairs – “MOCDA”) Circular Letter Number 9 of 2023 on AI Ethics (“MOCDA Circular Letter”), and complements the principles set out in Law Number 27 of 2022 on Personal Data Protection (“PDP Law”).

Against this backdrop, this article outlines the key risks associated with AI systems under the Draft Regulation and highlights safeguard measures that stakeholders may need to implement to mitigate those risks.

AI Ethics

The Draft Regulation adopts core AI Ethics principles originally set out in the MOCDA Circular Letter, namely:

a. inclusivity;

b. humanity and respect for human rights;

c. security and user-protection;

d. accessibility and non-discrimination;

e. transparency;

f. credibility and accountability;

g. personal data protection;

h. sustainable development and environmental responsibility; and

i. intellectual property protection.

These principles are further outlined in AI Ethics Guidelines attached to the Draft Regulation. Importantly, the Draft Regulation expressly requires relevant stakeholders to adhere to these principles in the development, implementation, and utilization of AI systems.

Who Must Comply?

The Draft Regulation applies broadly across the AI landscape, including the following stakeholders:

1. Users

End users who directly interact with AI systems through interfaces or AI-based services.

2. Sectoral Actors, comprising:

· Data providers: parties providing data for the training, testing, or validation of AI models;

· AI developers: parties designing, developing, or conducting initial training of AI models, or substantially modifying them, including determining the architecture, algorithms, and training methodologies used to produce AI systems;

· AI system providers: parties providing access to AI models and supporting infrastructure, or integrating AI into products, services, or platforms; and

· AI system operators: parties implementing and operating AI systems within a specific context of use, such as in public services, the industrial sector, or certain institutions.

3. Ministries/Agencies

Legislative, executive, and judicial institutions at the central and regional levels, as well as other institutions established under laws and regulations with supervisory or regulatory mandates within their sectors.

4. Other stakeholders

Including parent companies or industry associations.

Risk-Classification of AI Systems

To promote responsible AI development and deployment, the Draft Regulation adopts a tiered risk-classification approach. Under this approach, AI systems are regulated based on the level of risk they may pose to human rights, safety, and broader public interests, with higher-risk systems subject to stricter oversight.

The Draft Regulation classifies AI-related risks as follows:

a. Unacceptable risk: AI systems that threaten or endanger user safety and human rights are prohibited. Examples include:

(i) the use of real-time facial recognition technology in public spaces without a clear legal basis; and

(ii) AI-based social scoring systems that evaluate individuals based on behavioral, digital, or personal data to determine access to services or facilities.

b. High-risk: AI systems that process specific personal data under the PDP Law or that may have a significant impact on human rights, safety, or essential public services. These systems are subject to enhanced requirements and supervision, including Data Processing Impact Assessment (“DPIA”) obligations under the PDP Law. Examples include AI systems used for medical diagnostic support and creditworthiness assessments.

c. Low-risk: AI systems posing minimal or no threat to human rights or safety. Such systems must still implement appropriate safeguards to ensure responsible use. Examples include public-service chatbots used by government institutions and AI-based product recommendation tools on e-commerce platforms.
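For businesses that maintain an internal inventory of AI systems, the three tiers above could be captured in a simple triage helper. The Python sketch below is illustrative only: the class names, field names, and the `classify` function are hypothetical and do not appear in the Draft Regulation, and the yes/no flags stand in for what would in practice be a legal assessment of each system.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    """The three tiers described in the Draft Regulation (labels are ours)."""
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LOW = "low"


@dataclass(frozen=True)
class AISystemProfile:
    """Hypothetical inventory record; each flag reflects a prior legal review."""
    name: str
    # Threatens or endangers user safety and human rights (prohibited tier).
    threatens_safety_or_human_rights: bool = False
    # Processes specific personal data as defined under the PDP Law.
    processes_specific_personal_data: bool = False
    # May significantly affect human rights, safety, or essential public services.
    significant_impact: bool = False


def classify(profile: AISystemProfile) -> RiskTier:
    """Return the strictest tier whose criterion the profile meets."""
    if profile.threatens_safety_or_human_rights:
        return RiskTier.UNACCEPTABLE
    if profile.processes_specific_personal_data or profile.significant_impact:
        return RiskTier.HIGH
    return RiskTier.LOW


if __name__ == "__main__":
    inventory = [
        AISystemProfile("social scoring engine", threatens_safety_or_human_rights=True),
        AISystemProfile("credit scoring model", significant_impact=True),
        AISystemProfile("product recommendation tool"),
    ]
    for system in inventory:
        print(f"{system.name}: {classify(system).value}")
```

Checking the prohibited tier first means that a system meeting several criteria is always assigned the strictest applicable classification. The helper only records the outcome of a review; it does not replace the assessment itself.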

Safeguard Measures to Implement

Recognizing these risks, the Draft Regulation requires Sectoral Actors to implement safeguard measures throughout the development, implementation, and utilization stages of AI systems. While the Draft Regulation does not prescribe detailed safeguards for Users, it emphasizes that Users remain responsible for using AI systems ethically and responsibly and for avoiding misuse.

In this regard, the Draft Regulation provides that the safeguard measures must, at a minimum, address the following aspects:

a. benefit and protection for humans, the environment, and the state;

b. avoidance of harm and misuse;

c. transparency and accountability;

d. fairness and proportionality;

e. promotion of inclusivity and diversity;

f. security through system reliability and technical competence;

g. respect for intellectual property and culture; and

h. governance and human control.

Monitoring and Evaluation

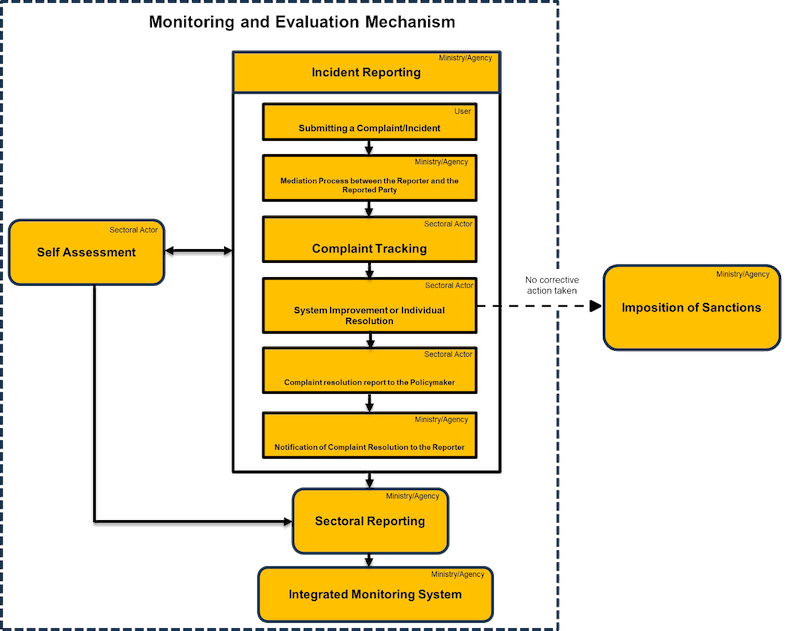

The Draft Regulation requires Ministries/Agencies and Sectoral Actors to perform monitoring and evaluation throughout the AI development, implementation, and utilization stages. These include conducting self-assessment and responding to incident reporting mechanisms, with the results expected to contribute to sectoral reporting and the eventual establishment of an integrated AI monitoring system, illustrated below:

What to Prepare?

The Draft Regulation reflects the Indonesian Government’s increasing focus on AI governance. Although the Draft Regulation may be subject to discussion or revision, several practical implications are already apparent.

Businesses deploying AI within their products or services should begin preparing to:

· Identify and classify AI-related risks;

· Implement appropriate safeguard measures;

· Conduct internal assessments as part of monitoring obligations; and

· Anticipate potential periodic reporting requirements.

The Draft Regulation also provides a two-year transition period for compliance, during which sector-specific guidance may emerge.

While the Draft Regulation does not expressly stipulate sanctions for non-compliance, enforcement risks may arise under existing Indonesian laws intersecting with AI activities, including Law Number 28 of 2014 on Copyright (“Copyright Law”), Law Number 11 of 2008 on Electronic Information and Transactions (as amended) (“EIT Law”), and the PDP Law. Accordingly, businesses should view AI Ethics compliance as part of their broader regulatory and risk management framework.

-----

If you have any questions, please contact:

- Reagan Roy Teguh, Partner – reagan.teguh@makarim.com

- Demi Narendra Soegandhi, Associate – demi.soegandhi@makarim.com

M&T Advisory is a digital publication prepared by the Indonesian law firm, Makarim & Taira S. It informs generally on the topics covered and should not be treated as legal advice or relied upon when making investment or business decisions. Should you have any questions on any matter contained in M&T Advisory, or other comments in general, please contact us at the email addresses provided above.